Given n feature vectors x 1= (x 11,…,x 1p ), ..., x n = (x n1,…,x np ) of size p and a vector of class labels y = (y 1,…,y n ), where y i ∈ {-1,1} describes the class to which the feature vector x i belongs, the problem is to build a two-class Support Vector Machine (SVM) classifier.

Training Stage

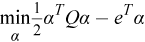

The SVM model is trained using the Boser method [Boser92] reduced to the solution of the quadratic optimization problem

with 0 ≤ α i ≤ C, i = 1, ... , n, y T α = 0,

where e is the vector of ones, C is the upper bound of the coordinates of the vector α, Q is a symmetric matrix of size n x n with Q ij =y i y j K(x i ,x j ), and K(x,y) is a kernel function.

Working subset of α updated on each iteration of the algorithm is based on the Working Set Selection (WSS) 3 scheme [Fan05]. The scheme can be optimized using one of these techniques or both:

-

Cache.

The implementation can allocate a predefined amount of memory to store intermediate results of the kernel computation.

-

Shrinking.

The implementation can try to decrease the amount of kernel related computations (see [Joachims99]).

The solution of the problem defines the separating hyperplane and corresponding decision function D(x)= ∑ k y k α k K(x k ,x) + b where only those x k that correspond to non-zero α k appear in the sum, and b is a bias. Each non-zero α k is called a classification coefficient and the corresponding x k is called a support vector.

Prediction Stage

Given the SVM classifier and r feature vectors x 1,…,x r , the problem is to calculate the signed value of the decision function D(x i ), i=1, ... , r. The sign of the value defines the class of the feature vector, and the absolute value of the function is a multiple of the distance between the feature vector and separating hyperplane.

|

Intel's compilers may or may not optimize to the same degree for non-Intel microprocessors for optimizations that are not unique to Intel microprocessors. These optimizations include SSE2, SSE3, and SSSE3 instruction sets and other optimizations. Intel does not guarantee the availability, functionality, or effectiveness of any optimization on microprocessors not manufactured by Intel. Microprocessor-dependent optimizations in this product are intended for use with Intel microprocessors. Certain optimizations not specific to Intel microarchitecture are reserved for Intel microprocessors. Please refer to the applicable product User and Reference Guides for more information regarding the specific instruction sets covered by this notice. Notice revision #20110804 |